Hugging Face

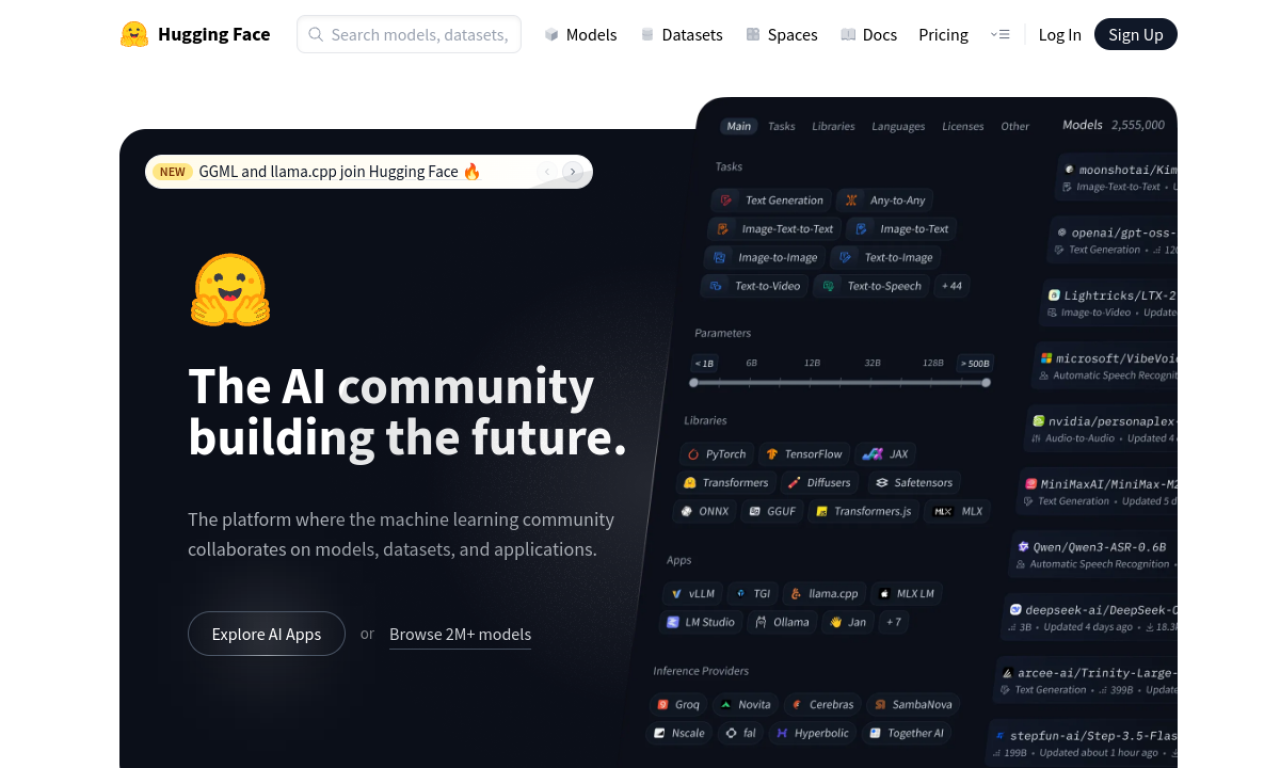

Freemium. The open-source AI community hub. Host, share, and deploy machine learning models, datasets, and applications with a massive community ecosystem.

What does this tool do?

Hugging Face is a platform for the machine learning community that serves as a central hub for discovering, sharing, and collaborating on AI models, datasets, and applications. It hosts over 2 million models and more than 500,000 datasets, making it the de facto repository for open-source ML work. Alongside hosting, it provides inference APIs that give unified access to 45,000+ models from multiple providers through a single endpoint. Developers can also build and deploy interactive AI applications as Spaces, containerized apps that run on free CPU hardware or paid GPU hardware. Beyond hosting, Hugging Face offers enterprise features such as SSO, audit logs, and resource management, and its GPU Inference Endpoints start at $0.60/hour.

AI analysis from Feb 23, 2026

Key Features

- Model Hub with 2M+ pre-trained models across text, image, video, audio, and 3D with version control and model cards

- Datasets repository with 500k+ public datasets and tools for dataset versioning and exploration

- Spaces: containerized application hosting with automatic Dockerfile generation and optional GPU acceleration

- Inference API providing unified access to 45,000+ models from multiple providers via a single API endpoint

- HuggingChat: a conversational AI interface with reasoning-model integration, including support for Omni

- Enterprise platform with SSO, audit logs, resource groups, private dataset viewers, and dedicated support

- Open-source libraries (Transformers, Datasets, Diffusers) for local model development and training
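To make the "single endpoint" idea concrete, here is a minimal standard-library sketch. It assumes the OpenAI-compatible chat-completions route `https://router.huggingface.co/v1/chat/completions` used by Hugging Face Inference Providers, bearer-token authentication with a token read from an `HF_TOKEN` environment variable, and hypothetical helper names `build_chat_request` and `ask`. Check the current Inference Providers documentation before relying on any of these details.

```python
import json
import os
import urllib.request

# Assumed endpoint: OpenAI-compatible chat completions on Hugging Face's router.
ROUTER_URL = "https://router.huggingface.co/v1/chat/completions"


def build_chat_request(model: str, prompt: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for one Hub model id."""
    body = {
        "model": model,  # Hub repo id; provider routing is assumed to happen server-side
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        ROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(model: str, prompt: str) -> str:
    """Send the request and return the first reply (needs network access and HF_TOKEN)."""
    req = build_chat_request(model, prompt, os.environ["HF_TOKEN"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Comparing two models is then a matter of calling `ask()` twice with different Hub model ids while everything else stays the same, which is the convenience the unified-API bullet describes.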

Use Cases

- ML researchers publishing and version-controlling pre-trained models for reproducibility and community feedback

- Data scientists discovering and fine-tuning existing models rather than training from scratch

- Developers building AI applications (chatbots, image generators, text-to-speech) without managing inference infrastructure

- Companies hosting proprietary models with enterprise-grade security and access controls

- Teams creating datasets and benchmarks for collaborative model training and evaluation

- Students and hobbyists learning ML by accessing state-of-the-art models and running interactive demos
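Reproducibility for published models usually comes down to pinning a Hub revision. The official clients accept a `revision` argument, for example `huggingface_hub.hf_hub_download(repo_id=..., filename=..., revision=...)` and Transformers' `from_pretrained(..., revision=...)`. The standard-library sketch below illustrates the idea under stated assumptions: it builds the Hub's `/{repo_id}/resolve/{revision}/{filename}` file URL and flags whether a revision is an immutable 40-character commit hash rather than a movable branch such as `main`. The helper names `resolve_url` and `is_pinned` are hypothetical.

```python
import re
from urllib.parse import quote

HUB_URL = "https://huggingface.co"
# A full Git commit SHA-1: exactly 40 lowercase hex characters.
_COMMIT_SHA = re.compile(r"[0-9a-f]{40}")


def resolve_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the download URL for one file at one revision of a Hub repo."""
    # Encode "/" inside the revision (e.g. "refs/pr/1") so it stays one path segment;
    # keep "/" in the filename so files in subfolders still resolve.
    return f"{HUB_URL}/{repo_id}/resolve/{quote(revision, safe='')}/{quote(filename)}"


def is_pinned(revision: str) -> bool:
    """True if revision is a full commit hash, which cannot be moved like a branch."""
    return _COMMIT_SHA.fullmatch(revision) is not None


if __name__ == "__main__":
    for rev in ("main", "0123456789abcdef0123456789abcdef01234567"):
        status = "pinned" if is_pinned(rev) else "NOT pinned (may change)"
        print(resolve_url("org/model", "config.json", rev), status)
```

A branch name like `main` is convenient for experimentation, but only a commit hash guarantees that a later rerun downloads the same weights and config.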

Pros & Cons

Advantages

- Massive ecosystem: 2M+ models and 500k+ datasets offer unmatched breadth for discovery and reuse, cutting down on redundant model training

- Low-friction deployment: Spaces allow publishing interactive demos without DevOps knowledge, and Inference Endpoints abstract away infrastructure complexity

- Strong community trust and adoption by major organizations (Meta, Google, Amazon, Intel, Microsoft), which signals long-term platform stability, although it does not guarantee the quality of any individual model

- Unified inference API aggregates models from multiple providers, reducing vendor lock-in and simplifying multi-model comparison

- Free tier with public model hosting and basic Spaces compute makes it accessible to individuals and academic research

Limitations

- Learning curve for beginners: navigating 2M+ models requires understanding model cards, task types, and performance metrics, and discoverability is poor without domain knowledge

- Free-tier Spaces compute is limited and slow; production-grade performance requires paid GPU hardware (Inference Endpoints start at $0.60/hour), which adds ongoing costs for always-on services

- Vendor dependency: while models are open-source, hosting and inference infrastructure are proprietary; platform downtime affects deployed applications

- Limited governance tools for free users: no private dataset viewers, audit logs, or resource controls; enterprise features start at $20/user/month, which may price out small teams

- Model quality varies widely: there is no centralized curation or validation, so users must evaluate model reliability themselves, and deploying untested models risks production issues
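One way to narrow the 2M+ models mentioned in the first limitation is to query the Hub's public model-listing API instead of browsing. The sketch below only builds the query URL. It assumes the `https://huggingface.co/api/models` endpoint with `search`, `filter`, `sort`, `direction`, and `limit` query parameters (the same knobs exposed by `huggingface_hub.HfApi().list_models`), and it assumes a task name such as `text-classification` can be passed as a `filter` tag. The function name `model_search_url` is hypothetical.

```python
from typing import Optional
from urllib.parse import urlencode

MODELS_API = "https://huggingface.co/api/models"


def model_search_url(query: str, task: Optional[str] = None, limit: int = 10) -> str:
    """Build a Hub search URL that lists the most-downloaded matching models first."""
    params = {
        "search": query,      # text search over model ids
        "sort": "downloads",  # rank by download count...
        "direction": "-1",    # ...in descending order
        "limit": str(limit),
    }
    if task is not None:
        params["filter"] = task  # assumed: task names work as tag filters
    return f"{MODELS_API}?{urlencode(params)}"


if __name__ == "__main__":
    print(model_search_url("sentiment", task="text-classification", limit=5))
```

Sorting by downloads is a popularity signal, not a quality check, so the shortlisted models still need a look at their model cards and an evaluation on your own data.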

Pricing Details

The free tier includes unlimited public model and dataset hosting and basic Spaces compute (CPU-only, resource-limited). Paid GPU upgrades for Spaces are available, but their prices are not stated here. Inference Endpoints start at $0.60/hour for GPU compute, with no service fees on model access. Enterprise plans start at $20/user/month and include SSO, audit logs, resource groups, and priority support. Exact GPU pricing and the full Enterprise feature breakdown are listed on Hugging Face's detailed pricing pages.
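The hourly rate matters most for always-on services. Below is a back-of-the-envelope estimate using the starting prices quoted in this section ($0.60/hour per GPU endpoint, $20/user/month for Enterprise). It is a sketch that assumes simple hourly billing and a 30-day month and ignores taxes, autoscaling, and larger GPU tiers; the function names are hypothetical. `Decimal` keeps the currency arithmetic exact.

```python
from decimal import Decimal

# Starting prices quoted on this page; real bills depend on hardware tier and usage.
ENDPOINT_GPU_RATE = Decimal("0.60")   # USD per hour for one GPU endpoint
ENTERPRISE_SEAT_RATE = Decimal("20")  # USD per user per month


def endpoint_monthly_cost(hours_per_day: int, days: int = 30,
                          rate: Decimal = ENDPOINT_GPU_RATE) -> Decimal:
    """Monthly cost of one endpoint, assuming it is billed for every running hour."""
    if not 0 <= hours_per_day <= 24:
        raise ValueError("hours_per_day must be between 0 and 24")
    return rate * hours_per_day * days


def enterprise_monthly_cost(users: int, rate: Decimal = ENTERPRISE_SEAT_RATE) -> Decimal:
    """Monthly seat cost for an Enterprise plan at the starting per-user price."""
    return rate * users


if __name__ == "__main__":
    print(endpoint_monthly_cost(24))          # always-on: 432.00
    print(endpoint_monthly_cost(8, days=22))  # business hours only: 105.60
    print(enterprise_monthly_cost(5))         # five seats: 100
```

Running the same endpoint only during business hours cuts the estimate by roughly three quarters, which is why scaling endpoints down when idle matters for continuous services.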

Who is this for?

ML engineers and data scientists building production AI systems; research teams publishing models and datasets; indie developers and startups deploying AI applications without DevOps resources; enterprises requiring secure model hosting with compliance controls; students and hobbyists learning ML through interactive models and tutorials.